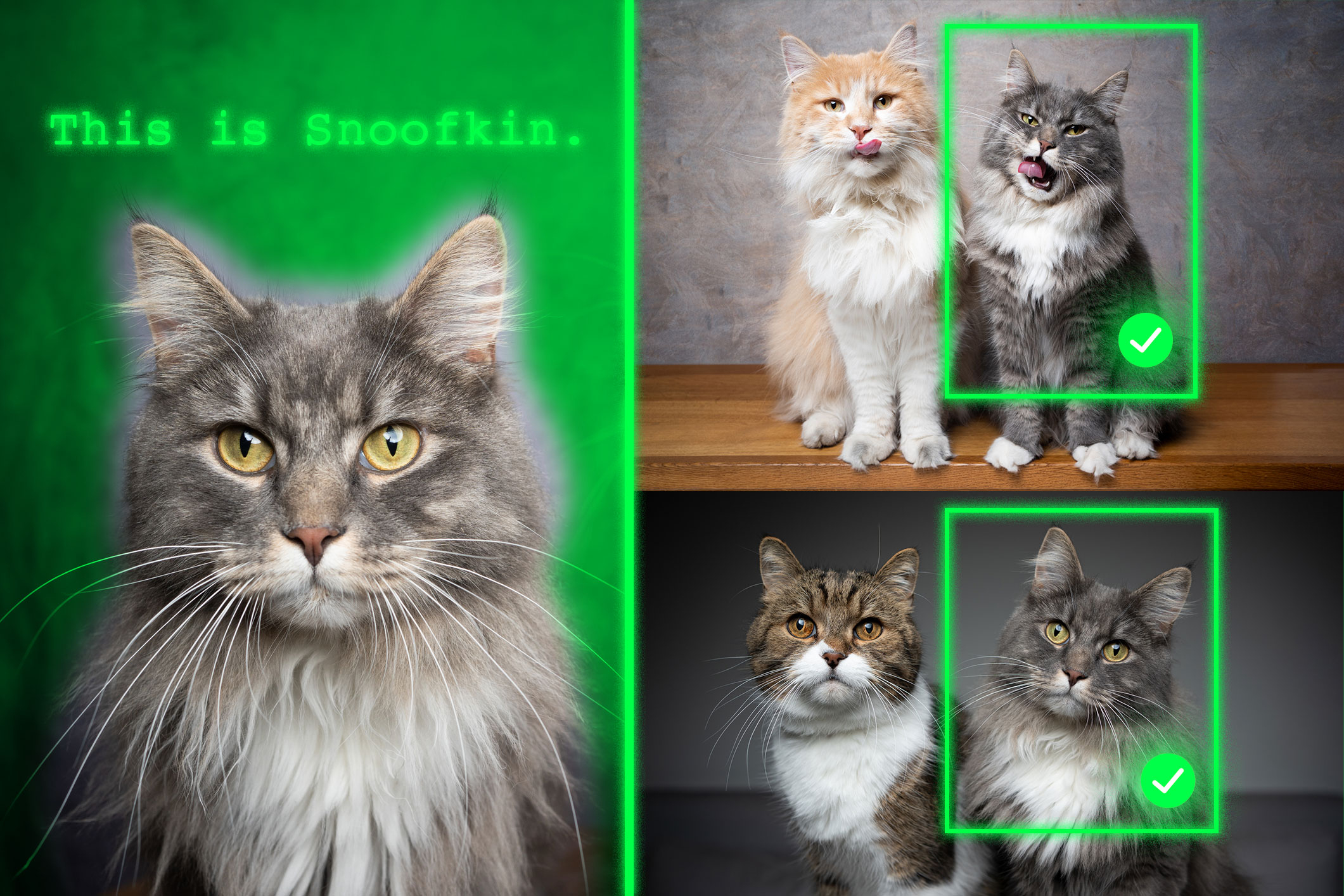

Method teaches generative AI models to locate personalized objects

Say a person takes their French Bulldog, Bowser, to the dog park. Identifying Bowser as he plays among the other canines is easy for the dog owner to do while on-site.

But if someone wants to use a generative AI model like GPT-5 to monitor their pet while they are at work, the model could fail at this basic task. Vision-language models like GPT-5 often excel at recognizing general objects, like a dog, but they perform poorly at locating personalized objects, like Bowser the French Bulldog.

To address this shortcoming, researchers from MIT and the MIT-IBM Watson AI Lab have introduced a new training method that teaches vision-language models to localize personalized objects in a scene.

Their method uses carefully prepared video-tracking data in which the same object is tracked across multiple frames. They designed the dataset so the model must focus on contextual clues to identify the personalized object, rather than relying on knowledge it previously memorized.

When given a few example images showing a personalized object, like someone’s pet, the retrained model is better able to identify the location of that same pet in a new image.

Models retrained with their method outperformed state-of-the-art systems at this task. Importantly, their technique leaves the rest of the model’s general abilities intact.

This new approach could help future AI systems track specific objects across time, like a child’s backpack, or localize objects of interest, such as a species of animal in ecological monitoring. It could also aid in the development of AI-driven assistive technologies that help visually impaired users find certain items in a room.

“Ultimately, we want these models to be able to learn from context, just like humans do. If a model can do this well, rather than retraining it for each new task, we could just provide a few examples and it would infer how to perform the task from that context. This is a very powerful ability,” says Jehanzeb Mirza, an MIT postdoc and senior author of a paper on this technique.

Mirza is joined on the paper by co-lead authors Sivan Doveh, a graduate student at Weizmann Institute of Science, and Nimrod Shabtay, a researcher at IBM Research; James Glass, a senior research scientist and the head of the Spoken Language Systems Group in the MIT Computer Science and Artificial Intelligence Laboratory (CSAIL); and others. The work will be presented at the International Conference on Computer Vision.

An unexpected shortcoming

Researchers have found that large language models (LLMs) can excel at learning from context. If they feed an LLM a few examples of a task, like addition problems, it can learn to answer new addition problems based on the context that has been provided.

A vision-language model (VLM) is essentially an LLM with a visual component connected to it, so the MIT researchers thought it would inherit the LLM’s in-context learning capabilities. But this is not the case.

“The research community has not been able to find a black-and-white answer to this particular problem yet. The bottleneck could arise from the fact that some visual information is lost in the process of merging the two components together, but we just don’t know,” Mirza says.

The researchers set out to improve VLMs’ abilities to do in-context localization, which involves finding a specific object in a new image. They focused on the data used to retrain existing VLMs for a new task, a process called fine-tuning.

Typical fine-tuning data are gathered from random sources and depict collections of everyday objects. One image might contain cars parked on a street, while another includes a bouquet of flowers.

“There is no real coherence in these data, so the model never learns to recognize the same object in multiple images,” he says.

To fix this problem, the researchers developed a new dataset by curating samples from existing video-tracking data. These data are video clips showing the same object moving through a scene, like a tiger walking across a grassland.

They cut frames from these videos and structured the dataset so each input would consist of multiple images showing the same object in different contexts, with example questions and answers about its location.

“By using multiple images of the same object in different contexts, we encourage the model to consistently localize that object of interest by focusing on the context,” Mirza explains.
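As a rough illustration of that structure, the sketch below turns frames of one tracked object into a single training input: earlier frames carry a question and the known location, and the last frame is left for the model to answer. The `Frame` type, the `build_sample` function, the question wording, and the box format are all assumptions made for this example; the article does not describe the researchers’ actual data format.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Frame:
    image_path: str                   # hypothetical path to an extracted video frame
    box: tuple[int, int, int, int]    # assumed format: (x_min, y_min, x_max, y_max)


def build_sample(frames: list[Frame], name: str) -> dict:
    """Turn frames of one tracked object into an in-context example.

    All frames except the last become worked examples (question plus answer);
    the last frame is the query whose box the model should predict.
    """
    if len(frames) < 2:
        raise ValueError("need at least one example frame and one query frame")
    *examples, query = frames
    question = f"Where is {name} in this image?"  # assumed prompt wording
    context = [
        {"image": f.image_path, "question": question, "answer": list(f.box)}
        for f in examples
    ]
    return {
        "context": context,
        "query": {"image": query.image_path, "question": question},
        "target": list(query.box),
    }
```

Calling `build_sample` with three frames would yield two worked examples in `context` and one held-out `target` box.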

Forcing the focus

But the researchers found that VLMs tend to cheat. Instead of answering based on context clues, they will identify the object using knowledge gained during pretraining.

For instance, since the model already learned that an image of a tiger and the label “tiger” are correlated, it could identify the tiger crossing the grassland based on this pretrained knowledge, instead of inferring from context.

To solve this problem, the researchers used pseudo-names rather than actual object category names in the dataset. In this case, they changed the name of the tiger to “Charlie.”

“It took us a while to figure out how to prevent the model from cheating. But we changed the game for the model. The model does not know that ‘Charlie’ can be a tiger, so it is forced to look at the context,” he says.
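The renaming step can be pictured with a short sketch like the one below, which gives each tracked object a pseudo-name that carries no hint of its category. The name pool, the random assignment, and the function names are assumptions for illustration only; the article says only that category names were replaced with pseudo-names such as “Charlie.”

```python
import random

# Hypothetical pool of pseudo-names; only "Charlie" appears in the article.
PSEUDO_NAMES = ["Charlie", "Milo", "Nova", "Pip"]


def assign_pseudo_names(track_categories: dict, seed: int = 0) -> dict:
    """Map each track id to a pseudo-name chosen without looking at its category."""
    rng = random.Random(seed)  # seeded so the assignment is reproducible
    return {track_id: rng.choice(PSEUDO_NAMES) for track_id in track_categories}


def make_question(pseudo_name: str) -> str:
    """Build a location question that never mentions the real category."""
    return f"Where is {pseudo_name} in this image?"


tracks = {"clip_001": "tiger", "clip_002": "car"}  # hypothetical track ids
names = assign_pseudo_names(tracks)
questions = {track_id: make_question(n) for track_id, n in names.items()}
```

Because the question text never contains “tiger” or “car,” a model trained on such prompts cannot answer by matching a familiar label and has to rely on the example images instead.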

The researchers also faced challenges in finding the best way to prepare the data. If the frames are too close together, the background does not change enough to provide data diversity.
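One simple way to avoid near-duplicate frames is to spread the picks evenly across a clip and reject clips that are too short for the desired spacing. The sketch below shows that idea; the function name, the even-spacing rule, and the `min_gap` parameter are assumptions for illustration, since the article does not say how the researchers chose their frames.

```python
def sample_spaced_frames(num_frames: int, k: int, min_gap: int) -> list:
    """Pick k frame indices spread evenly over a clip, at least min_gap apart.

    Raises ValueError if the clip is too short to honor the spacing.
    """
    if k < 1 or num_frames < 1:
        raise ValueError("k and num_frames must be positive")
    if k == 1:
        return [0]
    step = (num_frames - 1) // (k - 1)  # even spacing from first to last frame
    if step < min_gap:
        raise ValueError("clip too short for the requested spacing")
    return [i * step for i in range(k)]


print(sample_spaced_frames(num_frames=101, k=5, min_gap=10))  # [0, 25, 50, 75, 100]
```

A larger `min_gap` trades away usable clips for more background variation between the selected frames.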

In the end, fine-tuning VLMs with this new dataset improved accuracy at personalized localization by about 12 percent on average. When they included the dataset with pseudo-names, the performance gains reached 21 percent.

As model size increases, their technique leads to greater performance gains.

In the future, the researchers want to study possible reasons VLMs don’t inherit in-context learning capabilities from their base LLMs. They also plan to explore further mechanisms to improve the performance of a VLM without the need to retrain it with new data.

“This work reframes few-shot personalized object localization — adapting on the fly to the same object across new scenes — as an instruction-tuning problem and uses video-tracking sequences to teach VLMs to localize based on visual context rather than class priors. It also introduces the first benchmark for this setting with solid gains across open and proprietary VLMs. Given the immense significance of quick, instance-specific grounding — often without finetuning — for users of real-world workflows (such as robotics, augmented reality assistants, creative tools, etc.), the practical, data-centric recipe offered by this work can help enhance the widespread adoption of vision-language foundation models,” says Saurav Jha, a postdoc at the Mila-Quebec Artificial Intelligence Institute, who was not involved with this work.

Additional co-authors are Wei Lin, a research associate at Johannes Kepler University; Eli Schwartz, a research scientist at IBM Research; Hilde Kuehne, professor of computer science at Tuebingen AI Center and an affiliated professor at the MIT-IBM Watson AI Lab; Raja Giryes, an associate professor at Tel Aviv University; Rogerio Feris, a principal scientist and manager at the MIT-IBM Watson AI Lab; Leonid Karlinsky, a principal research scientist at IBM Research; Assaf Arbelle, a senior research scientist at IBM Research; and Shimon Ullman, the Samy and Ruth Cohn Professor of Computer Science at the Weizmann Institute of Science.

This research was funded, in part, by the MIT-IBM Watson AI Lab.
